Help me with my website and make me happy

I've tried everything. No Google tool can access my URL. Even the sitemap returns "Couldn't fetch". The Request Indexing returns "Indexing request rejected. During live testing, indexing issues were detected with the URL".

No error code or anything, just nothing works. It's a totally new domain, so this isn't a case of something that used to work and then stopped. DNS is fully propagated though.

URL (trying to index): https:// hundforsakringarna () .se

Sitemap (trying to submit): https:// hundforsakringarna () .se/sitemap_index.xml

Robots.txt: https:// hundforsakringarna () .se/robots.txt

The VPS hosts 5-10 other sites that I’ve set up in exactly the same way and all of them work without any issues. I just can’t seem to figure this one out.
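Since the sitemap report only says "Couldn't fetch", one thing that can be ruled out locally is whether the sitemap index itself is well-formed. Below is a minimal sketch, assuming the index follows the standard sitemaps.org schema. The function name `child_sitemaps` and the sample child sitemap names are made up for illustration and are not taken from the actual site.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def child_sitemaps(xml_text):
    """Return the <loc> URLs listed in a sitemap index document."""
    root = ET.fromstring(xml_text)  # raises ParseError if the XML is malformed
    return [
        loc.text.strip()
        for loc in root.findall("sm:sitemap/sm:loc", NS)
        if loc.text
    ]


# Hypothetical sitemap index body, for illustration only.
SAMPLE_INDEX = """<sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <sitemap><loc>https://hundforsakringarna.se/post-sitemap.xml</loc></sitemap>
  <sitemap><loc>https://hundforsakringarna.se/page-sitemap.xml</loc></sitemap>
</sitemapindex>"""

print(child_sitemaps(SAMPLE_INDEX))
```

If the real index parses and lists its child sitemaps, the file itself is probably not what Google is failing on.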

Comments

You can't have spaces in the URI.

See https://developer.mozilla.org/en-US/docs/Learn/Common_questions/What_is_a_URL for how to correctly write a URI.

Resubmit as

https://hundforsakringarna.se

and it should work.

Nope ;(

https://search.google.com/test/mobile-friendly?utm_source=gws&utm_medium=onebox&utm_campaign=suit&id=mx8MTL3v3ap3A6j2cY1EQA

I'm transferring the site from a VPS to a DirectAdmin Shared environment to see if it has anything to do with that. Will update in 5.

Now the site is a default WP installation on NEW host and the result is the same, Google can't crawl it.

Check if you have a robots.txt file. Delete or rename it.

NVM, I missed it… Just ignore this comment.

Try this: https://www.godaddy.com/help/allow-search-engine-indexing-in-wordpress-27809

Select Post name on this page: WP-admin->Settings->permalinks

Done, has nothing to do with that though. Google can't even look up the domain.

That's just for disabling the noindex tag in WP, it's disabled already. Google can't even look up the domain really. Thanks for helping bud but that's not it.

Googlebot will need some time to re-index.

Before this change, the robots and sitemap URLs did not work in my browser. They do now.

It was never enabled. You can see, live, how Google interprets the site using this tool: https://search.google.com/test/mobile-friendly. Doesn't work.

No, I transferred the site from the VPS to a web host and didn't enable any plugins. Since there wasn't an SEO plugin, these pages weren't active, so I installed Yoast SEO and now they're there again. They were missing for maybe 1-2 min.

It might be a DNS problem.

You don't have a DNS record for www.

Check if your txt record with google-site-verification is correct.

Other tools like PageSpeed Insights and web.dev are working fine.

So I think you should just wait.

Delete the robots.txt and try again.

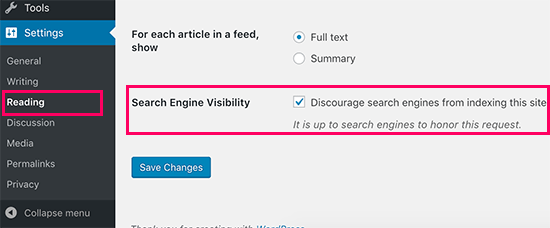

Also make sure the following is unchecked

Yoast SEO plugin settings -- see if you have settings that might influence bot crawl / indexing

Sadly nothing helped so far, and it’s not a matter of waiting. Google can’t access the URL at all. Nothing is wrong with the web files; Google can’t even reach them.

DNS changes take time to propagate.

You can flush it manually - https://developers.google.com/speed/public-dns/cache

If it still doesn't work after some time, try to disable the Cloudflare proxy and check.

It can't be a DNS/IP/etc issue as I can do a Google PageSpeed test on the site mentioned above, so google can connect to it.

From looking online the error you're getting would suggest google has previously had issues (search engine visibility off in WP, disallow rules in robots.txt for example) and is still using the old rules.

If you've not previously had this, check that no plugins are blocking Google's crawl bots, etc.

I'm not big up on SEO but might help.
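To rule out disallow rules without guessing, the robots.txt body can be checked locally. Here's a rough sketch using Python's standard `urllib.robotparser`. The `googlebot_allowed` helper and the sample robots.txt are made up for illustration; the sample is only a guess at what a default WordPress file might contain, not the actual file from the site. Note that Python's parser applies the first matching rule, which isn't exactly how Google resolves conflicting rules, so treat the result as a rough indication only.

```python
from urllib.robotparser import RobotFileParser


def googlebot_allowed(robots_txt, url, agent="Googlebot"):
    """Return True if the given robots.txt body lets `agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


# Hypothetical robots.txt body, for illustration only.
SAMPLE_ROBOTS = """User-agent: *
Disallow: /wp-admin/
Allow: /wp-admin/admin-ajax.php
"""

# A body that blocks every crawler from the whole site.
BLOCK_ALL = """User-agent: *
Disallow: /
"""

print(googlebot_allowed(SAMPLE_ROBOTS, "https://hundforsakringarna.se/"))
print(googlebot_allowed(BLOCK_ALL, "https://hundforsakringarna.se/"))
```

Paste the real robots.txt contents in place of the sample to see whether the homepage and the sitemap URL come back as allowed.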

Already did this.

Thing is, Google can connect, but not through the tools that use the same infrastructure as Googlebot (e.g. the mobile test).

No plugins are blocking, removed everything. This is a default WP installation with Yoast.
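One way to probe that difference is to request the page with a browser-style User-Agent and with Googlebot's published User-Agent string, then compare the HTTP status codes. A minimal sketch follows, with the big caveat that it only spoofs the header: the request still comes from your own IP, so it can reveal User-Agent based blocking but not blocking based on Google's IP ranges. The helper names `build_request` and `fetch_status` and the browser string are made up for illustration.

```python
import urllib.error
import urllib.request

USER_AGENTS = {
    # Generic browser-like string, chosen arbitrarily for this sketch.
    "browser": "Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 "
               "(KHTML, like Gecko) Chrome/120.0 Safari/537.36",
    # Googlebot's documented desktop User-Agent token.
    "googlebot": "Mozilla/5.0 (compatible; Googlebot/2.1; "
                 "+http://www.google.com/bot.html)",
}


def build_request(url, agent_key):
    """Build a GET request carrying the chosen User-Agent header."""
    return urllib.request.Request(
        url, headers={"User-Agent": USER_AGENTS[agent_key]}
    )


def fetch_status(url, agent_key):
    """Return the HTTP status code the server sends for this User-Agent."""
    try:
        with urllib.request.urlopen(build_request(url, agent_key), timeout=10) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # 403, 503, etc. are exactly what we want to see


# Example (needs network access):
# for key in USER_AGENTS:
#     print(key, fetch_status("https://hundforsakringarna.se/", key))
```

If the browser string gets a 200 and the Googlebot string gets a 403 or a challenge page, something in front of the site (firewall, CDN rule, security plugin) is filtering on the User-Agent.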

Well google can load a website which is using the same IP too.

Have you tried setting the webserver to force clients to retrieve files rather than use their cached version? This might make google get a new version if it's cached a previous robots.txt that was blocking it?

Maybe try disabling the Cloudflare proxy, or turn on Development Mode, and check? It could be serving stale/cached content. With dev mode on, requests should bypass the cache and hit your origin.

Read this: https://support.google.com/webmasters/answer/9044175

Thanks, no manual action.

There was never any robots.txt that was blocking access. However, it might have been a Cloudflare error: Cloudflare blocking Googlebot for some reason. I’ve just now changed nameservers to my registrar's default ones instead, and once they’ve propagated I’ll see if Google can access the website.

Thanks everyone for the tips so far!

Ah I checked the IP but it didn't look like a Cloudflare one.

So I:

Removed everything, installed WordPress fresh on an Apache web host instead of my Nginx VPS

Changed nameservers from Cloudflare to my registrar's

Still the same issue. Google is blocking the domain for some reason..

Btw yes the DNS is done for the Googlebot: https://toolbox.googleapps.com/apps/dig/#NS/

Google recognizes the new NS records. It still doesn't work though.

Google is waiting on your push-ups.

Do you have DKIM keys? If there's a problem (and switching nameservers might do that), Google ignores you with similar symptoms (other providers work, just not Google). You can use 8.8.8.8 to look up your domain and compare against the 1.1.1.1 response.
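The resolver comparison can be scripted. This is a rough sketch that uses the public DNS-over-HTTPS JSON endpoints of Google Public DNS and Cloudflare (the services behind 8.8.8.8 and 1.1.1.1) rather than raw DNS queries, which is an assumption of convenience. The helper names `extract_records`, `lookup` and `compare` are made up for illustration.

```python
import json
import urllib.parse
import urllib.request

# JSON DNS-over-HTTPS front ends for the two public resolvers.
RESOLVERS = {
    "google": "https://dns.google/resolve",
    "cloudflare": "https://cloudflare-dns.com/dns-query",
}

# Numeric DNS record type codes used in the JSON answers.
TYPE_CODES = {"A": 1, "NS": 2, "TXT": 16, "AAAA": 28}


def extract_records(response, rtype):
    """Pull the data fields of the requested record type out of a JSON answer."""
    code = TYPE_CODES[rtype]
    return {
        answer["data"]
        for answer in response.get("Answer", [])
        if answer.get("type") == code
    }


def lookup(resolver_url, name, rtype):
    """Query one resolver and return the set of matching record values."""
    query = urllib.parse.urlencode({"name": name, "type": rtype})
    request = urllib.request.Request(
        f"{resolver_url}?{query}", headers={"Accept": "application/dns-json"}
    )
    with urllib.request.urlopen(request, timeout=10) as resp:
        return extract_records(json.load(resp), rtype)


def compare(name, rtype="A"):
    """Return each resolver's answer so the two can be compared side by side."""
    return {label: lookup(url, name, rtype) for label, url in RESOLVERS.items()}


# Example (needs network access):
# print(compare("hundforsakringarna.se", "A"))
# print(compare("www.hundforsakringarna.se", "A"))
```

An empty set from either resolver means it sees no record of that type. Calling `compare` with `"TXT"` should also show whether both resolvers return the google-site-verification record.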

At the moment you have no A record for the domain.