New on LowEndTalk? Please Register and read our Community Rules.

All new Registrations are manually reviewed and approved, so a short delay after registration may occur before your account becomes active.

VirMach down?

Anyone else having trouble accessing their VirMach VPS? I have three active VPSes with them, two in Buffalo and one in LA. The one in LA (on node LAKVM8) and one of the Buffalo ones (on node NY10GKVM73) are working fine, while the other one on node NY10GKVM74 is inaccessible - not responding to pings, VNC in SolusVM times out as well.

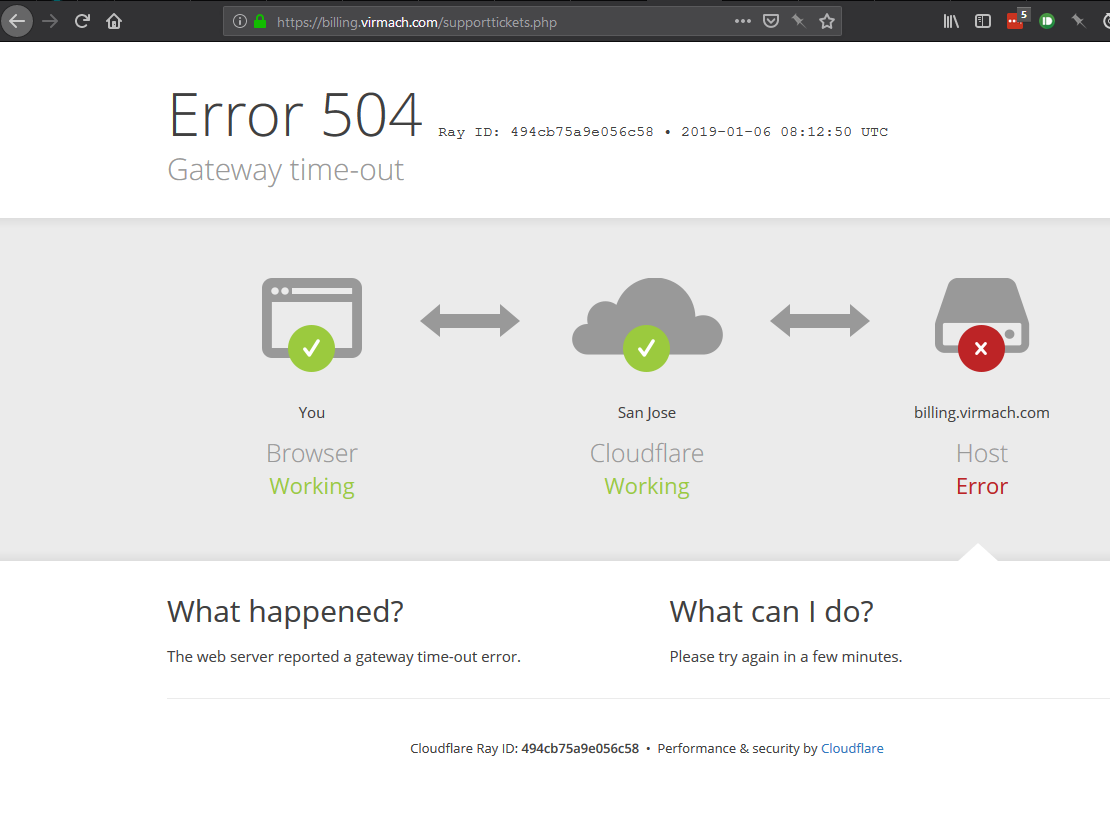

Their support ticket system is also down (Error 504 Gateway time-out from Cloudflare - maybe WHMCS is trying to pull info from the VPS node that's down) so I can't even open a ticket about it.

Comments

I have one on NY10GKVM79 working fine, and their billing panel doesn't seem to show any error. (I didn't open a ticket, so I can't confirm whether their ticket system is down, though I can view my tickets without any problems.)

@VirMach ??

Nothing on their network status page about this node. And I am able to open the ticket system just fine.

Strange... This is what I'm seeing when I access their ticket system:

You should hide your IP.

Directly hitting the submit ticket page (https://billing.virmach.com/submitticket.php) works for me, it's just the ticket listing page that's timing out. Oh well. Submitted a ticket.

Thanks. Edited.

All my buffalo are currently present and accounted for (don't have anything on NY10GKVM74 to check though).

Looks like it's back now. SSH'd in and my VPS was not rebooted, so I guess it was a network outage. syslog confirms that, as it was having trouble hitting the NTP servers:

@VirMach, please don't let your server down

That is cute

That can happen when the VPS is assigned to the wrong SolusVM server in WHMCS; it causes all sorts of issues. I assume you only get the issue when you try to open a ticket, and for around 30 minutes after.

Try opening a ticket but do not select a service.

You're a font of WHMCS knowledge, Mr Smith.

Response from support:

They have now updated network status page.

I'm amazed they replied so quickly, particularly so late at night in the USA. I was expecting to have to wait until tomorrow before being able to access my VPS again.

All my VirMach VPSes are up.

Their support is top notch. Twice I opened a ticket to install Windows and they did it in 10 minutes.

You can check it here if you can't access your service:

https://billing.virmach.com/serverstatus.php

Nope. Wrong, twice.

1 - If the VPS is assigned to the wrong node, it won't cause any issues. It will even load the info just fine, as WHMCS loads the vserverid. Only the HTML5 VNC may fail. It would never cause issues with the VPS network.

2 - The ticket fails to send if you have a tab open as an admin with a VPS that isn't responding, because the PHP thread will lock for a few minutes. E.g., restarting PHP on the admin end fixes this, but that's obviously not doable. So you can access the website faster if you open it in incognito mode and avoid visiting the page that shows the VPS SolusVM menu/info.

In this case he was able to open other pages, so it wasn't a PHP thread lock; it was just a timeout caused by a busy database, failing to send emails, or something else.

You were close though

@MikePT Oh sorry, that issue I have suffered about 50 times must all be in my head... Not the wrong NODE, the wrong SolusVM server, i.e. the master: the server field, not the nodeid field.

You are wrong about the vserverid: it also uses the server field. When you have multiple masters, you have plenty of duplicate vserverids.
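To illustrate the duplicate-vserverid point, here is a minimal Python sketch. It assumes, hypothetically, that each SolusVM master hands out its own auto-incrementing vserverid starting at 1, and that a billing-side lookup keys either on the vserverid alone or on the (server, vserverid) pair. `Master`, `VServer`, and the master names are made up for illustration; they are not part of the WHMCS or SolusVM APIs.

```python
from dataclasses import dataclass
from itertools import count


@dataclass(frozen=True)
class VServer:
    master: str      # hypothetical SolusVM master name
    vserverid: int   # assumed to be unique only within one master
    hostname: str


class Master:
    """Hypothetical SolusVM master with its own incremental vserverid counter."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._ids = count(1)  # each master starts counting at 1

    def create(self, hostname: str) -> VServer:
        return VServer(self.name, next(self._ids), hostname)


# Two independent masters, each with its own counter.
a, b = Master("master-a"), Master("master-b")
servers = [a.create("vps1"), a.create("vps2"), b.create("vps3")]

# Keyed on vserverid alone, master-b's ID 1 overwrites master-a's ID 1.
by_id = {s.vserverid: s for s in servers}
# Keyed on (master, vserverid), every VPS stays distinct.
by_pair = {(s.master, s.vserverid): s for s in servers}

print(len(by_id), len(by_pair))             # 2 3
print(by_pair[("master-b", 1)].hostname)    # vps3

# Selecting the wrong master for ID 1 resolves to a different, valid-looking VPS.
print(by_pair[("master-a", 1)].hostname)    # vps1
```

The last lookup shows the failure mode being argued about: with the wrong server selected, the vserverid still resolves, just to someone else's VPS.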

But I am sure you had fun writing that anyway so not a total loss.

Why do you run multiple masters? And what's your setup like? What I mentioned does happen, and it hasn't happened to me 50 times but more like 5000+. How can you even set up multiple masters?

Read above, though. Test a KVM. Only the vserverid is needed on the product details page. Then, for HTML5 VNC to work from WHMCS, the nodeid is needed.

How can you set up multiple masters? What kind of question is that?

What tangent are you off on now? I have been running SolusVM-based backends for 9 years and also manage them for multiple other people. Multiple masters is supported and very common; however, when you make an error and select the wrong server in the module when creating a new product, it causes exactly the issue I described.

Why you would let it happen 5000 times without just fixing the product is beyond me.

OK, delete the SolusVM server details under configservers.php but leave the vserverid, and see how far you get lol.

Huh, you aren't getting it, I assume.

Can you explain to me why you are running multiple SolusVM masters?

And how do you connect those to each other?

I am going to choose not to get into that, because it literally has nothing to do with this thread.

But it really is quite simple and exactly as you would expect.

It just doesn't make sense to run multiple masters. Nor has it ever happened that an existing vserverid was created again; it's an incremental value. If you are running several masters, then you are running multiple SolusVM backends and DBs; however, you cannot connect those to each other.

Edit: didn't clarify. I meant SolusVM to SolusVM!

/end

I suppose I and many others have been doing it wrong for years then... thanks.

I and many others have been using SolusVM since before nodeid was even a thing.

It makes a lot of sense and there are plenty of very good reasons to do so in terms of enhancing end user experience, I am sure if you give it a few hours thought it will come to you.

Feel free to PM me and I will explain to you why I said this. 👍

I don't think I will engage in an educating-Mike session, thanks.

All I did was offer a possible explanation to the OP, showing it's probably not a major issue (turned out not to be the case). You decided, due to your limited understanding, that you wanted to correct me while not understanding what I was suggesting, and now you want to question the way I run my environment?

It is just strange, man. Get back on your medication.

Virmach down again?!

My KVM node (Buffalo) seems to be affected too.

It's still up, but so damn slow I can't even connect via SSH.

Has anyone else noticed something?